OpenClaw-RL is an RL server that lets users deploy their personal models and continuously improve them through everyday interactions in OpenClaw. We propose an optimization method that combines the strengths of GRPO and on-policy distillation, turning the full interaction history among the model, the user, and the environment into reinforcement learning signals. We also design a set of experiments that demonstrate the framework’s effectiveness in efficiently optimizing personal agents. Code repository: https://github.com/Gen-Verse/OpenClaw-RL

Speaker Bio

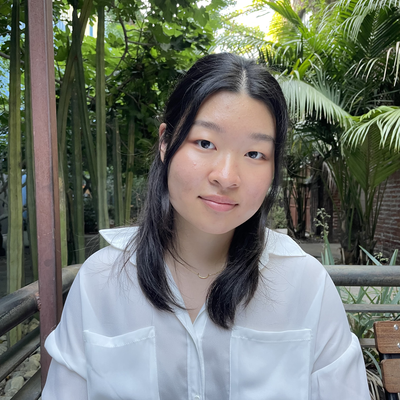

Yinjie Wang is a second-year PhD student at the University of Chicago and currently an intern at the Princeton University AI Lab. He graduated from the School of the Gifted Young at USTC. His research focuses on large language models, AI agents, and RL methods for them. His work includes RL frameworks for different application scenarios, such as OpenClaw-RL and RLAnything for agents, CURE for coders, and dLLM-RL for diffusion language models. His first-author papers have been published at top international conferences such as NeurIPS and ICLR, and one of his papers received a Spotlight at NeurIPS 2025.

More Details

- When: Tue 31 March 2026, 1-2 pm (Brisbane time)

- Speaker: Yinjie Wang (UChicago & Princeton)

- Host: Ruihong Qiu

- Coordinator: Zijian Wang

- Zoom: https://uqz.zoom.us/j/89903354449 [Recording]

No.26-02 Simulating Real Users with State Alignment
